Making Myself Obsolete

— Writing a Linter for Linting Linters

Source: Paleontologists once thought it had a brain in its butt.

In December 2015 I was looking for static analysis tools to integrate into trivago's CI process. The idea was to detect typical programming mistakes automatically. That's quite a common thing, and there are lots of helpful tools out there which fit the bill.

So I looked for a list of tools...

To my surprise, the only list I found was on Wikipedia, and it was outdated. There was no such project on GitHub, where most modern static analysis tools were hosted.

Without overthinking it, I opened up my editor and wrote down a few tools I found through my initial research. After that, I pushed the list to GitHub.

I called the project Awesome Static Analysis.

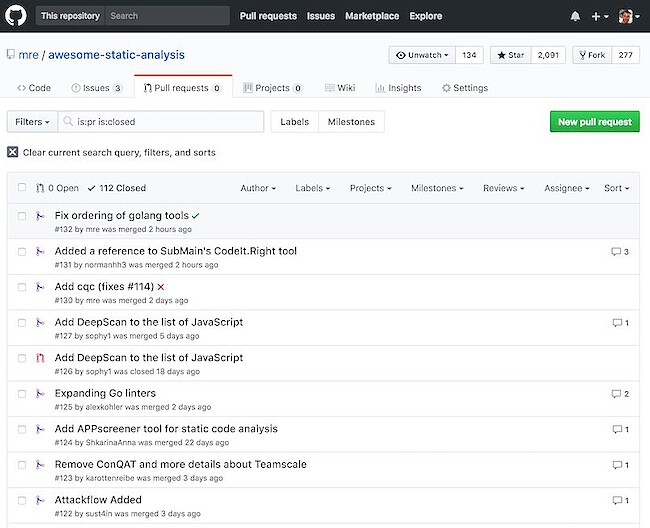

Fast forward two years and the list has grown quite a bit. So far, it has 75 contributors and 277 forks, and it has received over 2000 stars. (Thanks for all the support!) (Update May 2018: 91 contributors, 363 forks, over 3000 stars.)

Around 1000 unique visitors find the list every week. That's not a lot, but I feel obliged to keep it up to date because it has become an essential source of information for many people.

It now lists around 300 tools for static analysis. Everything from Ada to TypeScript is on there. What I find particularly motivating is that the authors themselves now create pull requests to add their tools!

There was one problem though: The list of pull requests got longer and longer, as I was busy doing other things.

Adding contributors

I always try to make team members out of regular contributors. My friend and colleague Andy Grunwald and Ouroboros Chrysopoeia are both valuable collaborators. They help me work through new PRs whenever they find the time.

But let's face it: checking the pull requests is a dull, manual task. What needs to be checked for each new tool can be summarized like this:

- Formatting rules are satisfied

- Project URL is reachable

- License annotation is correct

- Tools of each section are alphabetically ordered

- Description is not too long

I guess it's obvious what we should do with that checklist: automate it!
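Two of these checks, alphabetical order and description length, are mechanical enough to sketch in a few lines of Rust. This is not the bot's actual code: the `Entry` struct, the function names and the 500-character limit are assumptions made up for illustration.

```rust
/// One tool entry of a section. The field names are assumptions for this sketch.
struct Entry {
    name: String,
    description: String,
}

/// Hypothetical limit; the threshold used by the real list may differ.
const MAX_DESCRIPTION_CHARS: usize = 500;

/// Returns the first pair of neighbouring names that is out of
/// (case-insensitive) alphabetical order, if there is one.
fn first_unordered_pair(entries: &[Entry]) -> Option<(&str, &str)> {
    entries
        .windows(2)
        .find(|pair| pair[0].name.to_lowercase() > pair[1].name.to_lowercase())
        .map(|pair| (pair[0].name.as_str(), pair[1].name.as_str()))
}

/// Collects human-readable complaints for one section.
/// An empty result means the section passes both checks.
fn check_section(entries: &[Entry]) -> Vec<String> {
    let mut problems = Vec::new();
    if let Some((before, after)) = first_unordered_pair(entries) {
        problems.push(format!("`{}` should be listed after `{}`", before, after));
    }
    for entry in entries {
        let length = entry.description.chars().count();
        if length > MAX_DESCRIPTION_CHARS {
            problems.push(format!(
                "description of `{}` has {} characters (limit: {})",
                entry.name, length, MAX_DESCRIPTION_CHARS
            ));
        }
    }
    problems
}
```

Calling `check_section` on a section where `rustfmt` is listed before `Clippy` yields one ordering complaint. Returning a list of messages instead of a plain boolean makes it easy to report every problem of a pull request at once.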

A linter for linting linters

So why not write an analysis tool that checks our list of analysis tools? It sounds pretty meta, but it is actually pretty straightforward.

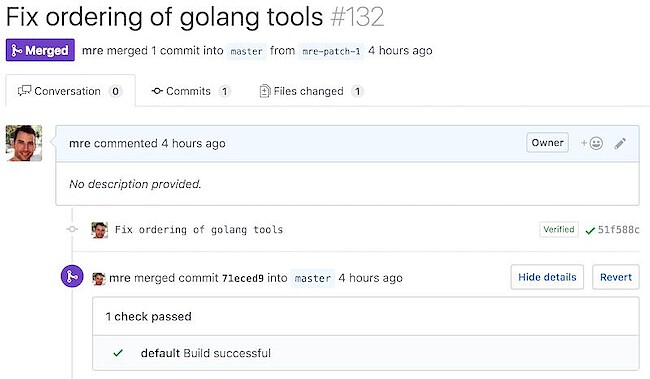

With every pull request, we trigger our bot, which checks the above rules and responds with a result.

The first step was to read the GitHub documentation about building a CI server.

Just for fun, I wanted to create the bot in Rust. The two most popular GitHub clients for Rust were github-rs (now deprecated) and hubcaps. Both looked pretty neat, but then I found afterparty, a "GitHub webhook server".

The example looked fabulous:

extern crate log;
extern crate env_logger;
extern crate afterparty;
extern crate hyper;

use afterparty::{Delivery, Event, Hub};
use hyper::Server;

This allowed me to focus on the actual analysis code, which makes for a pretty boring read. It mechanically checks for the things mentioned above and could be written in any language. If you want to have a look (or even contribute!), check out the repo.

Talking to GitHub

After the analysis code was done, I had a bot, running locally, waiting for incoming pull requests.

But how could I talk to GitHub?

I found out that I should use the Status API and send a POST request to /repos/mre/awesome-static-analysis/statuses/:sha

(:sha is the commit ID that points to the HEAD of the pull request):

{
  "state": "success",
  "description": "The build succeeded!"
}

I could have used one of the existing Rust GitHub clients, but I decided to write a simple function to update the pull request status.

The function reads a GitHub token from the environment and sends the JSON payload as a POST request using the reqwest library.
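The pieces of such a request can be sketched with the standard library alone. This is not the reqwest-based function from the bot, only an illustration of what goes over the wire; `State`, `status_path`, `escape_json`, `status_body` and `auth_header` are names made up for this sketch, and actually sending the request is left out.

```rust
/// The four commit states the Status API accepts.
#[allow(dead_code)]
enum State {
    Pending,
    Success,
    Failure,
    Error,
}

impl State {
    fn as_str(&self) -> &'static str {
        match self {
            State::Pending => "pending",
            State::Success => "success",
            State::Failure => "failure",
            State::Error => "error",
        }
    }
}

/// Path of the status endpoint for one commit.
fn status_path(owner: &str, repo: &str, sha: &str) -> String {
    format!("/repos/{}/{}/statuses/{}", owner, repo, sha)
}

/// Escapes the characters that must not appear raw inside a JSON string.
fn escape_json(text: &str) -> String {
    let mut out = String::with_capacity(text.len());
    for c in text.chars() {
        match c {
            '"' => out.push_str("\\\""),
            '\\' => out.push_str("\\\\"),
            '\n' => out.push_str("\\n"),
            '\r' => out.push_str("\\r"),
            '\t' => out.push_str("\\t"),
            c if (c as u32) < 0x20 => out.push_str(&format!("\\u{:04x}", c as u32)),
            c => out.push(c),
        }
    }
    out
}

/// JSON body with the two fields shown in the payload above.
fn status_body(state: &State, description: &str) -> String {
    format!(
        "{{\"state\": \"{}\", \"description\": \"{}\"}}",
        state.as_str(),
        escape_json(description)
    )
}

/// Value of the `Authorization` header for a personal access token.
fn auth_header(token: &str) -> String {
    format!("token {}", token)
}
```

In a real bot the token would come from something like `std::env::var("GITHUB_TOKEN")`, and an HTTP client would POST `status_body(...)` to `status_path(...)` on the GitHub API host with that header set.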

Using reqwest turned out to be a problem in the end: while afterparty was using version 0.9 of hyper, reqwest was using 0.11. Unfortunately, these two versions depend on different builds of the openssl-sys bindings. That's a well-known problem, and the only way to fix it is to resolve the conflict.

I was stuck for a while, but then I saw that there was an open pull request to upgrade afterparty to hyper 0.10.

So inside my Cargo.toml, I pointed afterparty at the repository behind that pull request:

[dependencies]
afterparty = { git = "https://github.com/ms705/afterparty" }

This fixed the build, and I could finally move on.

Deployment

I needed a place to host the bot.

Preferably for free, as it was a non-profit Open Source project. Also, the provider would have to run binaries.

For quite some time, I had been following a product named zeit. It runs any Docker container using an intuitive command line interface called now.

I fell in love the first time I saw their demo on the site, so I wanted to give it a try.

So I added a multi-stage Dockerfile to my project:

FROM rust as builder
COPY . /usr/src/app
WORKDIR /usr/src/app
RUN cargo build --release

FROM debian:stretch
RUN apt update \
    && apt install -y libssl1.1 ca-certificates \
    && apt clean -y \
    && apt autoclean -y \
    && apt autoremove -y
# COPY --from resolves source paths from the root of the builder stage, not its WORKDIR
COPY --from=builder /usr/src/app/target/release/check .
EXPOSE 4567
ENTRYPOINT ["./check"]
CMD ["--help"]

The first stage would build a release binary; the second would run it at container startup. Well, that didn't work, because zeit does not support multi-stage builds yet.

The workaround was to split up the Dockerfile into two and connect them both with a Makefile. Makefiles are pretty powerful, you know?

With that, I had all the parts for deployment together.

# Build Rust binary for Linux
docker run --rm -v $(CURDIR):/usr/src/ci -w /usr/src/ci rust cargo build --release

# Deploy Docker images built from the local Dockerfile
now deploy --force --public -e GITHUB_TOKEN=${GITHUB_TOKEN}

# Set domain name of new build to `check.now.sh`
# (The deployment URL was copied to the clipboard and is retrieved with pbpaste on macOS)
now alias `pbpaste` check.now.sh

Here's the output of the deploy using now:

> Deploying ~/Code/private/awesome-static-analysis-ci/deploy
> Ready! https://deploy-sjbiykfvtx.now.sh (copied to clipboard) [2s]
> Initializing…
> Initializing…
> Building
> ▲ docker build
Sending build context to Docker daemon 2.048 kB
> Step 1 : FROM mre0/ci:latest
> latest: Pulling from mre0/ci
> ...
> Digest: sha256:5ad07c12184755b84ca1b587e91b97c30f7d547e76628645a2c23dc1d9d3fd4b
> Status: Downloaded newer image for mre0/ci:latest
> ---> 8ee1b20de28b
> Successfully built 8ee1b20de28b
> ▲ Storing image
> ▲ Deploying image
> ▲ Container started
> listening on 0.0.0.0:4567
> Deployment complete!

The last step was to add check.now.sh as a webhook inside the awesome-static-analysis project settings.

Now, whenever a new pull request comes in, you can see that little bot spring into action!

Outcome and future plans

I am very pleased with my choice of tools: afterparty saved me from a lot of manual work, while zeit made deployment really easy.

It feels like Amazon Lambda on steroids.

If you look at the code and the commits for my bot, you can see all my little missteps, until I got everything just right. Turns out, parsing human-readable text is tedious.

Therefore I was thinking about turning the list of analysis tools into a structured format like YAML. This would greatly simplify the parsing and have the added benefit of having a machine-readable list of tools that can be used for other projects.
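As a rough sketch of what that could look like: the code below parses one list line into a struct and prints it as a YAML entry. The line format `* [name](url) - description`, the `Tool` struct, the function names and the example tool are all assumptions for illustration, not the layout the list actually uses.

```rust
/// A machine-readable tool entry. Field names are hypothetical.
#[derive(Debug, PartialEq)]
struct Tool {
    name: String,
    url: String,
    description: String,
}

/// Parses a line of the assumed form `* [name](url) - description`.
/// Returns `None` for anything else. Names containing `](` or URLs
/// containing `)` would already trip this up, which hints at why
/// parsing human-readable text gets tedious.
fn parse_entry(line: &str) -> Option<Tool> {
    let rest = line.trim().strip_prefix("* [")?;
    let (name, rest) = rest.split_once("](")?;
    let (url, rest) = rest.split_once(')')?;
    let description = rest.trim().strip_prefix("- ")?;
    Some(Tool {
        name: name.to_string(),
        url: url.to_string(),
        description: description.trim().to_string(),
    })
}

/// Wraps a value in double quotes, escaping backslashes and quotes.
fn quote(text: &str) -> String {
    format!("\"{}\"", text.replace('\\', "\\\\").replace('"', "\\\""))
}

/// Renders one tool as an item of a YAML sequence.
fn to_yaml(tool: &Tool) -> String {
    format!(
        "- name: {}\n  url: {}\n  description: {}\n",
        quote(&tool.name),
        quote(&tool.url),
        quote(&tool.description)
    )
}
```

For example, `parse_entry("* [example-lint](https://example.com/example-lint) - Finds example bugs")` gives a `Tool` whose three fields can be checked individually, and `to_yaml` turns it into a three-line YAML item. With a structured file as the source of truth, the parser disappears and only the checks remain.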

Update May 2018

While attending the WeAreDevelopers conference in Vienna (can recommend that), I moved the CI pipeline from zeit.co to Travis CI. The reason was that I wanted the linting code next to the project, which greatly simplified things. First and foremost, I don't need the web request handling code anymore, because Travis takes care of that. If you like, you can compare the old and the new version.

Thanks for reading! I mostly write about Rust and my (open-source) projects.